Rendering accurate text has long been a stumbling block for even the most advanced AI image generators, but it’s among the strongest suits of Google’s just-updated Nano Banana 2 engine.

Available now in the Gemini app (you’ll also find it in Google Search, AI Studio, and other Google products), Nano Banana 2 boasts a range of new features, including up to 2K resolution that can be upscaled up to 4K, “enhanced” instruction following that helps the model adhere better to your prompts, and the ability to lean on Gemini’s “real-world” knowledge, allowing it to draw real-time information via web search as it renders images.

Not bad, but even more impressive is Nano Banana 2’s text fidelity. I’ve been asking Nano Banana 2 to create images with billboards, signs, newspapers, and other objects with embedded text, and it’s been performing like a champ, largely avoiding the gibberish that earlier AI image generators typically produced when trying to render letters and words.

For example, I prompted Nano Banana 2 to render an image of a robot smoking a cigarette in Times Square, with a neon marquee reading “Nano Banana 2 on Broadway” in the background. No problem, and it rendered the image (below) in roughly 10 seconds.

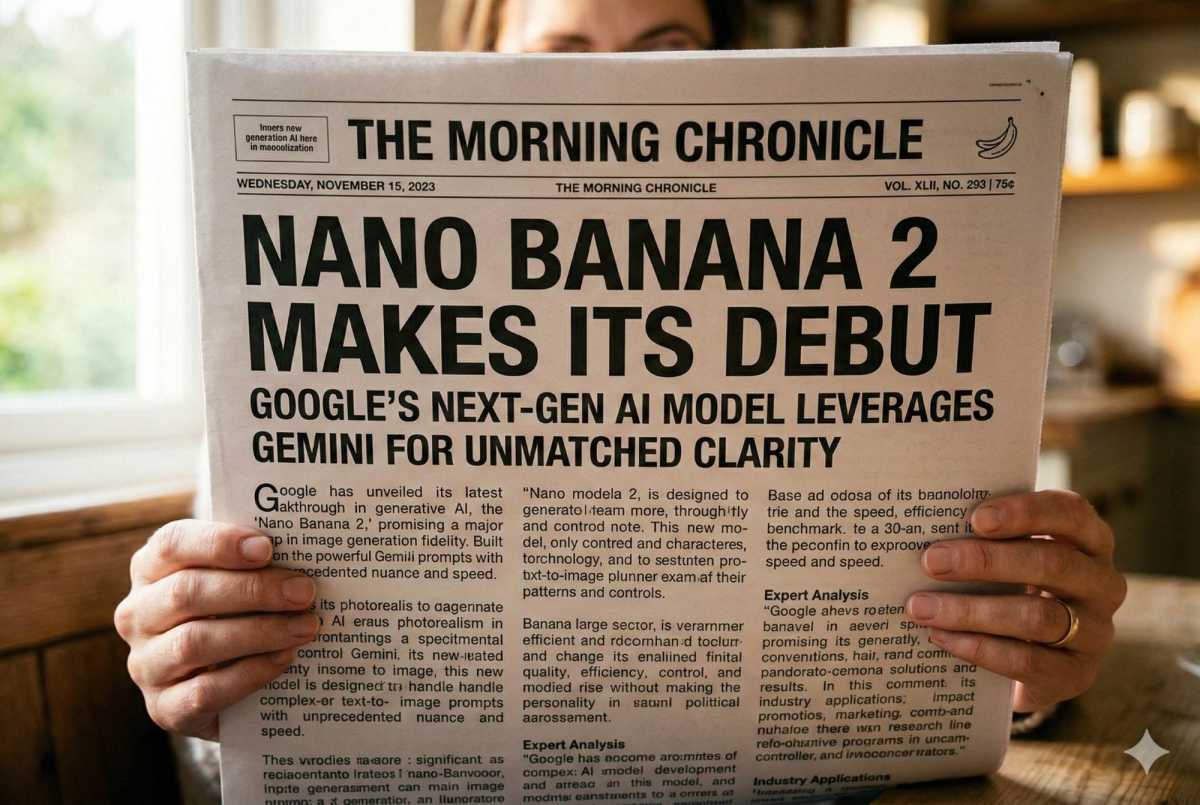

I then asked Nano Banana 2 to create a photo of a woman reading a newspaper in a breakfast nook, with the newspaper headline reading “Nano Banana 2 makes its debut.” But for this test, I upped the ante: I asked the engine to write the sub-headline and the article itself, and directed that the story should specifically be about Nano Banana 2.

Well, the model got the sub-headline just right, but even better, it did write the article–up to a point, anyway. The article text is a tad wiggly, but you can almost read it.

I then pushed Nano Banana 2 a little more, asking it to zoom in on the article and enhance the text.

Here, the text rendering broke down a bit: “Google has unveiled its latest akthrough [sic] in generative AI, the ‘Nano Banana 2’,” the article reads, “promising a major leap [the word “leap” is partially obscured by a finger] in image generation fidelity.” Not bad, but as you keep reading, the text fidelity does start to crumble.

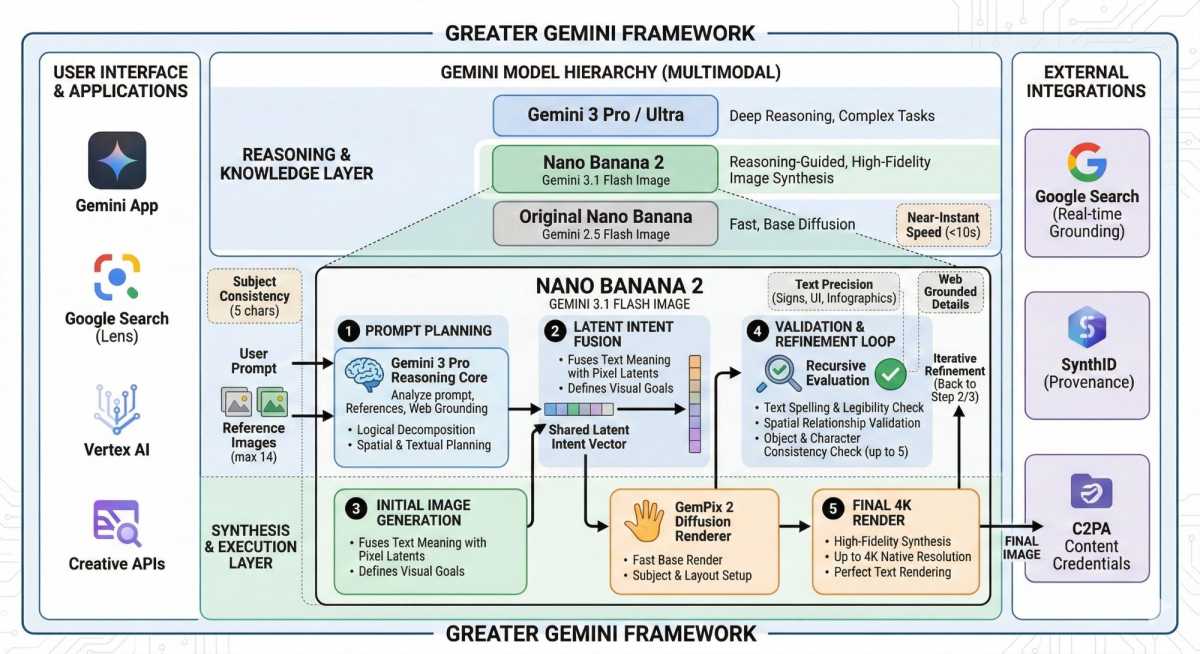

Finally, I tried asking Nano Banana 2 to draw a diagram of–well, itself. “Render a diagram of nano banana 2’s architecture within the greater Gemini framework, complete with text captions,” I prompted, and about 15 seconds later I got this:

Looking closely at the diagram, I didn’t see any text gibberish at all, and the diagram and captions seemed to make sense, or at least they did to my untrained eye.

When I plugged the diagram into the Gemini app, the “thinking” version of Gemini assured me it was a “remarkably accurate architectural map” of the overall Gemini framework, accurately depicting how the new model can handle up to five consistent characters within an image workflow. It also correctly referenced the brand-new GemPix 2 Diffusion Renderer, the Nano Banana 2 component that takes the engine’s native 2K image renders and upscales them to 4K.

All in all, very impressive, although Nano Banana 2 also raises the question of when OpenAI will counter with a follow-up to last year’s GPT Image 1.5. That could happen any day now, if not today.

This article was written by: Nermeen Nabil Khear Abdelmalak

All rights reserved to: USAGoldMines. www.usagoldmines.com
